The metaverse is a concept used primarily in the tech and media industries, but also by journalists. It is fair to say that nobody really knows what it is or what it will become.

For many, the metaverse is the second coming of Second Life (2003), a 3D virtual world where avatars hang out, talk with others and build the world together, while others use it to refer to the next generation of multi-user online games, such as Roblox (2006), Minecraft (2011) and Fortnite (2017). What all of these have in common is that, while they are platforms for playing games, they are at the same time places to hang out and talk with others. In this sense we may call them 3D social media with avatars. Metaverses like these already have a relatively long history.

In addition to 3D virtual worlds, the metaverse is often considered to be a service where the real and virtual worlds are merged, as in Pokémon Go (2016), the world's first commercially successful augmented reality (AR) game. Since the developer of Pokémon Go is currently building a platform for AR developers, it is fair to say that this kind of metaverse is also already here, just full of Pokémon.

If we look at the research literature, we may claim that today the metaverse is everything that belongs to the area of mixed reality, as defined by Milgram and Kishino in 1994 with their reality-virtuality continuum.

The article *What is Mixed Reality?* by Speicher, Hall and Nebeling (2019) gives a good overview of more recent research and discussion on the topic.

It might be sensible not to use the concept of the metaverse at all, but since it is being discussed as the future of the Internet, I wanted to write something about it, too.

I have also published a research article in which we experimented with learning with mixed reality in mirror worlds. Mixed reality and mirror worlds are often considered to be a key part of the metaverse and, not surprisingly, education (such as corporate onboarding and creative teamwork) has been presented as one of its areas of application. Therefore, I feel I may have something to say. At least, I want to say something about the future of the Internet.

Firstly, when it comes to 3D virtual reality, there is very little discussion about the possibilities of using photogrammetry to create multi-user 3D virtual worlds. We humans live in a rich audio-visual real world. For over two million years we have been learning to live and act in our environment. We are hardwired by evolution to navigate in a real world, with real people and real objects. Still, current 3D virtual worlds are cartoons rather than photorealistic replicas of the real world.

I believe that the future of the Internet lies not in cartoon-like 3D virtual worlds, but in photorealistic replicas of real-world environments.

This means that the avatars in these worlds should also be photorealistic. Being computer generated, they will naturally still be editable with filters and other forms of image manipulation. The starting point for creating an avatar will therefore be a photorealistic 3D model of the user, made with photogrammetry and then edited to look the way the user likes. What matters most is a face that others can recognise, in the same way as we recognise people from photos. I am convinced that even a rounded paper-doll avatar with a face photo is better for social interaction than a cartoon-like avatar.

The first step, however, is to create the photorealistic 3D worlds: replicas of real environments.

We are getting there. There is some interesting recent research on using photogrammetry to bring 3D models of the real world to the Web. It is also interesting that this work is being done not by the tech companies of Silicon Valley but by The New York Times. Their R&D Lab recently published *An End-to-End Guide to Photogrammetry with Mobile Devices* and instructions on *Delivering 3D Scenes to the Web*. Furthermore, they have released open-source code for people interested in experimenting with it.

As mentioned earlier, AR is also considered an important part of the metaverse. Although at first it may look totally different from the 3D metaverse, I think the two could be integrated. If the core of the metaverse is photorealistic replicas of real-world environments, the data collected about these spaces can easily be brought into the AR experienced at the actual location.

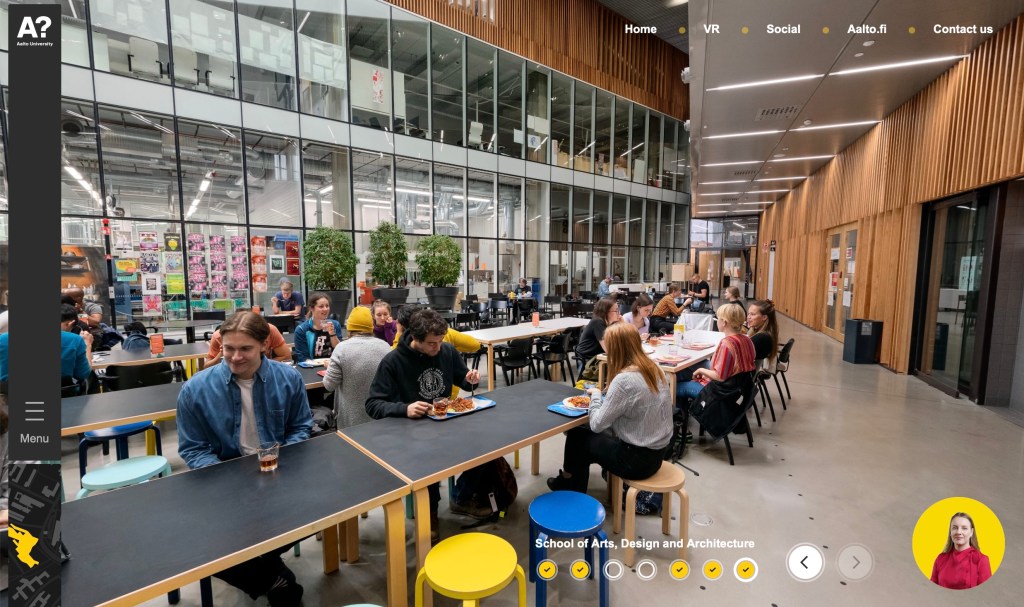

If you now look at the first image in this blog post, you may imagine a use case where you are standing in the restaurant and looking for my research group's space in the same building. Because the whole building has been modelled to create the photorealistic 3D world, the information about our space is also saved in the system. In that situation you could ask your phone "where is Teemu" and get audio instructions to find me, or you could use your phone to project little arrows onto the floor guiding you to our office.
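To make the "where is Teemu" idea a bit more concrete, here is a minimal sketch of how such wayfinding could work, assuming the building model has been reduced to a graph of named places with walking distances between them. All place names, distances and the `WHERE_IS` lookup are hypothetical, and Dijkstra's shortest-path algorithm is simply one reasonable choice; the resulting list of waypoints is what an AR client could turn into arrows on the floor or audio instructions.

```python
import heapq

# Hypothetical navigation graph extracted from the building model:
# each edge is (place, neighbour, walking distance in metres).
EDGES = [
    ("restaurant", "lobby", 20.0),
    ("lobby", "stairs", 10.0),
    ("lobby", "elevator", 12.0),
    ("stairs", "corridor_2f", 8.0),
    ("elevator", "corridor_2f", 10.0),
    ("corridor_2f", "research_group_office", 15.0),
]

# Hypothetical lookup saved together with the model: who can be found where.
WHERE_IS = {"Teemu": "research_group_office"}


def build_graph(edges):
    """Turn the edge list into an undirected adjacency map."""
    graph = {}
    for a, b, dist in edges:
        graph.setdefault(a, []).append((b, dist))
        graph.setdefault(b, []).append((a, dist))
    return graph


def shortest_route(graph, start, goal):
    """Dijkstra's algorithm: return (total distance, list of waypoints), or None."""
    queue = [(0.0, start, [start])]
    visited = set()
    while queue:
        dist, place, path = heapq.heappop(queue)
        if place == goal:
            return dist, path
        if place in visited:
            continue
        visited.add(place)
        for neighbour, step in graph.get(place, []):
            if neighbour not in visited:
                heapq.heappush(queue, (dist + step, neighbour, path + [neighbour]))
    return None  # goal is not reachable from start


graph = build_graph(EDGES)
distance, route = shortest_route(graph, "restaurant", WHERE_IS["Teemu"])
print(f"{distance:.0f} m via {' -> '.join(route)}")
```

Run as is, this prints `53 m via restaurant -> lobby -> stairs -> corridor_2f -> research_group_office`. The hard part in practice is not the routing but localising the phone inside the same model, which this sketch simply takes for granted.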

With this kind of integration, the Metaverse could be a space for user innovation, just like the Web. People could develop services that add notes, audio and video clips or AI bots to a space, and this information would be accessible and editable both in the real world and in its virtual replica. I am sure that with this kind of open Metaverse platform you can imagine thousands of use cases and applications.
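One way to picture this is a single shared store of annotations, each anchored to a modelled space and a position in that space's own coordinate frame. The virtual-world client and the AR client would then read and write the same data. The sketch below is only an illustration under that assumption: the `Annotation` and `AnnotationStore` classes, the space ID format and the 2-metre search radius are all hypothetical, and it presumes the AR device can localise itself in the same coordinates as the photogrammetric model.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Annotation:
    space_id: str   # hypothetical ID of a modelled space
    position: tuple # (x, y, z) in metres, in the space's own coordinate frame
    kind: str       # e.g. "note", "audio", "video", "bot"
    content: str
    author: str


@dataclass
class AnnotationStore:
    """One shared store, read and written by both the AR and the virtual-world client."""
    items: list = field(default_factory=list)

    def add(self, annotation):
        self.items.append(annotation)

    def nearby(self, space_id, position, radius=2.0):
        """Annotations in the given space within `radius` metres of `position`."""
        return [a for a in self.items
                if a.space_id == space_id and math.dist(a.position, position) <= radius]


store = AnnotationStore()

# Written in the virtual replica, e.g. by an avatar standing at the office door.
store.add(Annotation("campus/building-a/floor-2", (4.0, 0.0, 1.5),
                     "note", "Knock and come in!", "teemu"))

# Read in the real building by an AR phone localised at roughly the same spot.
for a in store.nearby("campus/building-a/floor-2", (4.5, 0.2, 1.4)):
    print(a.kind, a.content)
```

Because both clients hold references to the same annotation objects, an edit made on either side (for example changing `content`) would be what the other side sees next; a real platform would of course need a networked, access-controlled store rather than an in-memory list.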

I know that there are challenges if the Metaverse is strongly linked to real spaces and environments. Therefore the 3D virtual world part of the Metaverse should also be open to developing entirely imaginary spaces and environments. The point is that the photorealistic part would be the main entry point for people, and from there you could find your way to the imaginary 3D worlds.

As you read this, you may be wondering who could build this kind of platform, one that merges an open photorealistic 3D virtual world with AR.

The answer is obvious. It must be built by us, just as the Web was and still is built by us. Many of the software pieces needed to build this kind of Metaverse are already open source. So we could already start working in this direction with our camera phones, free software, open standards and the Web.

The Mozilla Foundation and other non-commercial web players are really important in the development of an open Metaverse. Mozilla is already developing Hubs, a multi-user virtual space built on WebVR, and it should probably become the universal Metaverse platform.

I expect that soon, in less than two years, you will be able to visit me in our photorealistic 3D office on our campus from your laptop. There you will find me and my colleagues working as photorealistic avatars, good enough for you to recognise us. You will also enter the room as an avatar. If your avatar is a photorealistic replica of yourself, the door is open and we can talk over audio. If your avatar is a dragon (or has no legs!), you will have to convince me a bit more before I open the virtual door for you.